Last year I came across an article on serverbuilds.net about building a high-capacity DAS for Unraid. As covered in the previous article on the network reappraisal, I was looking for a more efficient way of using my drives, and this was the inspiration. Equipment-wise, I already had the case, drive cages (some were purchased for Janus 2) and a Corsair PSU from an old gaming system, plus a whole load of drives.

Some additional items were required to get this up and running:

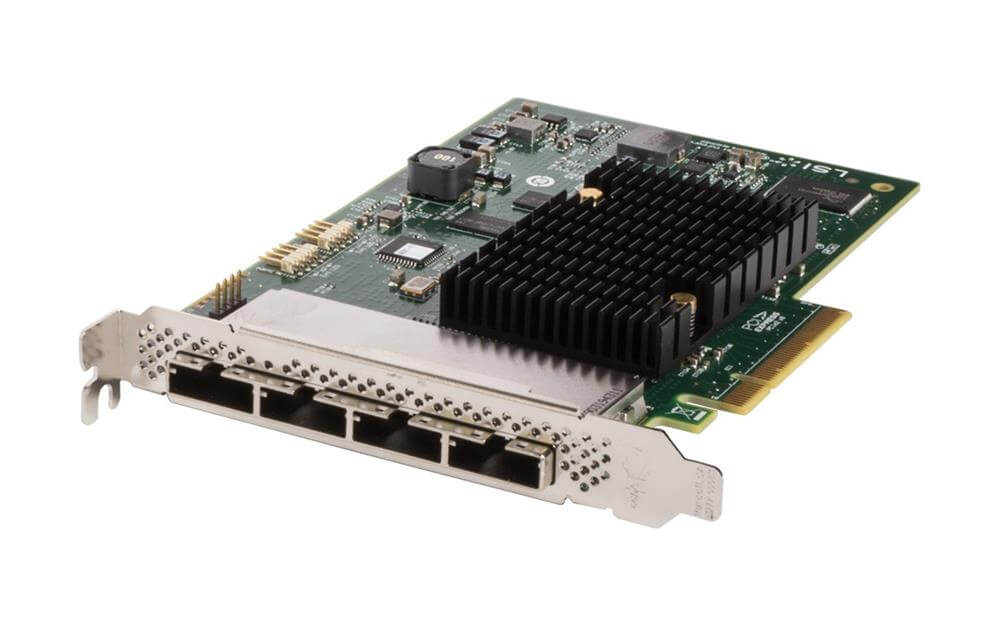

- 1 x LSI 9201-16e SAS HBA card £50

- 4 x SFF-8088 to 4 x SATA 2 metre breakout cables £80

- 1 x 24 Pin PSU tester £10

- 2 x SATA to 4 SATA Splitter power cable £20

- 2 x Molex to 5 x 3 Pin connector for fans £20

- 2 x 140mm Corsair Red LED fans £25

Total additional cost approx. £200

The key to this project is the LSI 9201-16e SAS HBA card, which enables connection of up to 16 SATA drives in an external enclosure using the 4 x SFF-8088 breakout cables, with 4 x SATA drives per cable. The 24 pin PSU tester connects to the 24 pin power cable, allowing the PSU to power on without a motherboard simply by using the ON/OFF switch on the rear of the unit. The PSU, which is from 2012, came with 2 x 4 SATA power cables and 2 x 2 SATA cables, so the SATA splitters were purchased to allow for the full 16 drives planned. More modern PSUs tend to come with 4 x 4 SATA power cables. The Molex to 3 pin splitters provide power for the fans; the case already had 2 x 140mm fans in the front and 1 x 140mm on the rear. Additional fans in the form of the 140mm Corsair Red LEDs were added to the top of the case. The drives spin down when not in use, so when idle the PSU is only providing power for the fans.
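As a quick sanity check on the numbers above, here is a minimal Python sketch that counts data ports and SATA power plugs. It assumes each splitter plugs into one existing SATA power plug and turns it into four; the function name `sata_power_connectors` is made up for illustration. It only counts connectors and says nothing about how much current a single PSU cable can safely carry.

```python
# Figures taken from the build description; helper name is hypothetical.
HBA_PORTS = 4          # SFF-8088 ports on the LSI 9201-16e
DRIVES_PER_CABLE = 4   # each breakout cable fans out to 4 SATA drives


def sata_power_connectors(cable_sizes, splitters, splitter_outputs=4):
    """Count usable SATA power plugs after fitting splitters.

    Assumption: each splitter occupies one existing plug and
    provides `splitter_outputs` new ones in its place.
    """
    base = sum(cable_sizes)
    if splitters > base:
        raise ValueError("more splitters than plugs to attach them to")
    return base - splitters + splitters * splitter_outputs


data_ports = HBA_PORTS * DRIVES_PER_CABLE
stock_power = sata_power_connectors([4, 4, 2, 2], splitters=0)
with_splitters = sata_power_connectors([4, 4, 2, 2], splitters=2)

print(f"Data ports: {data_ports}")
print(f"Power plugs without splitters: {stock_power}")
print(f"Power plugs with 2 splitters: {with_splitters}")
```

Under that assumption the stock cables fall short of 16 plugs, while two splitters leave a couple spare.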

The DAS must be left powered on at all times when Ultron is active, otherwise the drives will drop out of the array, destroying parity. Each machine is on its own 500 watt APC Smart UPS. Ultron draws much more power than the DAS does, so in any power failure Ultron will shut down long before the DAS runs out of juice: even at full load the DAS draws about 50 watts vs Ultron's idle 180 watts. It's possible to link the PSUs together so they both turn on from the server case switch, but this involves sourcing 24 pin splitters and such, and another consideration would be a flimsy connection between the two cases. I may do this mod in a future project but it's unnecessary for now.
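To put rough numbers on that, here is a back-of-envelope Python sketch comparing how long each UPS might last. It assumes both UPS units have the same usable battery energy and that runtime scales inversely with load, which is only an approximation for real batteries. The `USABLE_WH` value is a made-up placeholder, not a measured figure for these units; only the ratio between the two runtimes is meaningful here.

```python
def runtime_minutes(usable_wh, load_w):
    """Rough runtime estimate: usable battery energy divided by load."""
    if load_w <= 0:
        raise ValueError("load must be positive")
    return usable_wh / load_w * 60


USABLE_WH = 100.0        # hypothetical placeholder, not a measured value
ULTRON_IDLE_W = 180.0    # Ultron at idle, from the text
DAS_FULL_LOAD_W = 50.0   # DAS at full load, from the text

ultron = runtime_minutes(USABLE_WH, ULTRON_IDLE_W)
das = runtime_minutes(USABLE_WH, DAS_FULL_LOAD_W)
ratio = das / ultron

print(f"Ultron: {ultron:.0f} min, DAS: {das:.0f} min, ratio: {ratio:.1f}x")
```

Whatever the real battery capacity is, this simple model has the DAS outlasting Ultron by a factor of 180 / 50 = 3.6, and that is with Ultron at idle and the DAS at full load.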

Moving on to the subject of cooling. The forward stack remains cool, with drives ranging between 20°C and 25°C under full load; however, the aft stack averages a few degrees more, at about 24°C to 28°C, due to the decreased airflow. To combat this, I plan to use two GPU fan ducts I came across, which came from some old Cooler Master cases and are designed to fit 120 mm fans. These will be stacked vertically, blowing on the aft stack, with some shrouds built around them using plasticard to direct the airflow. I'll probably buy another cage for the aft stack to shunt the drives up one cage height, which will give increased airflow at the bottom of the stack. It's still a WIP though, as it might not be needed; I'll see what happens when the summer comes.

10 drives were installed at first, and all data was transferred off the last 10-bay QNAP via LAN using Midnight Commander. Syncthing wouldn't work, as it bypassed the way Unraid splits up the data and filled one drive only. The remaining 6 drives were added to the array once this was complete, along with the cache pool drives.